AWS Cloud Data Engineering Tech Stack by Jim Macaulay

Published 10/2022

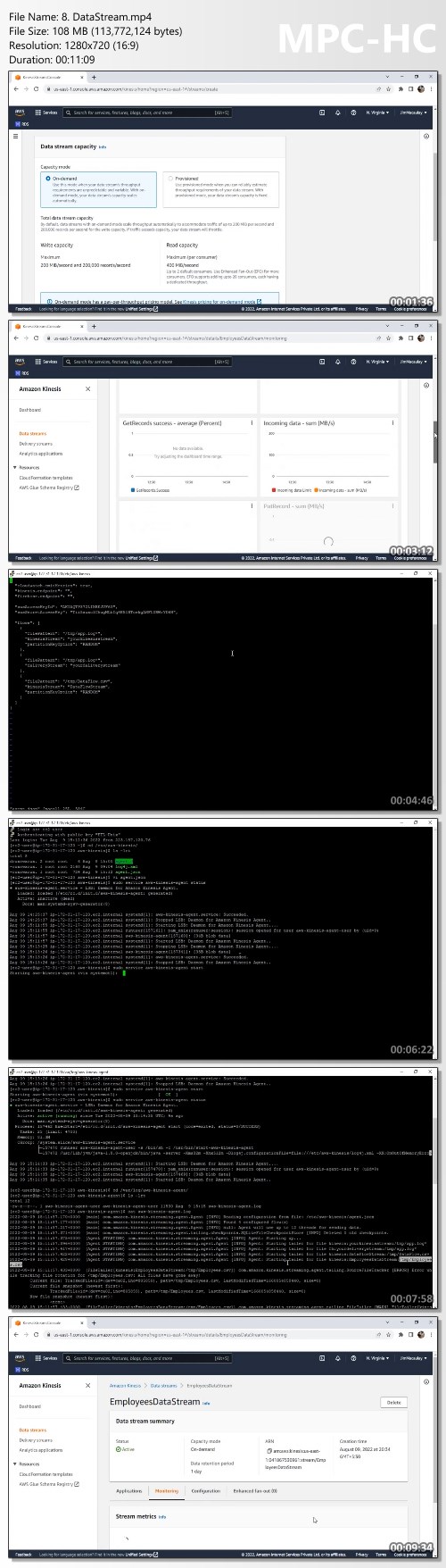

MP4 | Video: h264, 1280×720 | Audio: AAC, 44.1 kHz, 2 Ch

Genre: eLearning | Language: English | Duration: 68 lectures (3h 40m) | Size: 1.78 GB

Learn AWS Glue | DataBrew | Athena | Kinesis – Integrating with Redshift | PostgreSQL RDS | S3 | Firehose | Glue Catalog

What you’ll learn

AWS Cloud Data Engineering

Data Engineering Tools

ETL, Analytical, Querying and Streaming

Complete code for Data Engineering tools in AWS cloud infrastructure

Requirements

No programming experience needed

Description

This course is useful for:

ETL Developers

Data Engineers

ETL Architects

Data Migration Specialists

Database Administrators

Database Developers

Data integration is the process of preparing and combining data for analytics, machine learning, and application development. It involves multiple tasks, such as discovering and extracting data from various sources; enriching, cleaning, normalizing, and combining data; and loading and organizing data in databases, data warehouses, and data lakes. These tasks are often handled by different types of users, each of whom uses different products.
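To make the cleaning, normalizing, and combining tasks concrete, here is a minimal Python sketch. It is not taken from the course: the two sources (`crm_rows`, `billing_rows`), their field names, and the helper functions are hypothetical, and plain in-memory lists stand in for real data stores.

```python
# Hypothetical records from two sources; all field names are illustrative only.
crm_rows = [
    {"id": "1", "email": " Alice@Example.com ", "country": "us"},
    {"id": "2", "email": "bob@example.com", "country": "IN"},
    {"id": "3", "email": "", "country": "de"},  # missing email, dropped below
]
billing_rows = [
    {"customer_id": 1, "total": "19.99"},
    {"customer_id": 2, "total": "5.00"},
    {"customer_id": 2, "total": "7.50"},
]


def clean(row):
    """Normalize one CRM row; return None when the email is missing."""
    email = row["email"].strip().lower()
    if not email:
        return None
    return {
        "id": int(row["id"]),
        "email": email,
        "country": row["country"].upper(),
    }


def combine(customers, payments):
    """Attach each customer's summed payment total to the customer record."""
    totals = {}
    for payment in payments:
        key = payment["customer_id"]
        totals[key] = totals.get(key, 0.0) + float(payment["total"])
    return [dict(c, total=round(totals.get(c["id"], 0.0), 2)) for c in customers]


customers = [c for c in map(clean, crm_rows) if c is not None]
integrated = combine(customers, billing_rows)

for record in integrated:
    print(record)
```

In a managed service the same steps would typically be expressed as job configuration or transformation scripts rather than hand-written loops; the sketch only shows the shape of the work.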

We will be working with data platforms such as:

Data stores

Amazon S3

Amazon Relational Database Service (Amazon RDS)

Third-party JDBC-accessible databases

Data streams

Amazon Kinesis Data Streams

Amazon Kinesis Data Firehose

AWS Glue Data Catalog

Classifiers

Crawlers

Data engineering on AWS supports fast querying for analytics over massive volumes of data, and feeds that data to Business Intelligence tools, dashboards, and other applications.

Data engineers work in a variety of settings to build systems that collect, manage, and convert raw data into usable information for data scientists and business analysts to interpret. Their ultimate goal is to make data accessible so that organizations can use it to evaluate and optimize their performance.

ETL, which stands for extract, transform and load, is a data integration process that combines data from multiple data sources into a single, consistent data store that is loaded into a data warehouse or other target system.
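The three ETL stages can be sketched end to end with the Python standard library alone. This is an illustration under stated assumptions, not the course's code: an inline CSV string stands in for a source file (for example, an object in S3), an in-memory SQLite database stands in for the target warehouse, and the `orders` table and its columns are hypothetical.

```python
import csv
import io
import sqlite3

# Assumption: inline CSV text stands in for a real source file.
SOURCE_CSV = """order_id,amount,currency
1001,25.50,usd
1002,,usd
1003,10.00,USD
"""


def extract(text):
    """Extract: parse the raw CSV text into a list of dict rows."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: skip rows without an amount, cast types, uppercase currency."""
    cleaned = []
    for row in rows:
        if not row["amount"]:
            continue
        cleaned.append(
            (int(row["order_id"]), float(row["amount"]), row["currency"].upper())
        )
    return cleaned


def load(conn, rows):
    """Load: write the transformed rows into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, amount REAL, currency TEXT)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()


# Assumption: in-memory SQLite stands in for a data warehouse.
conn = sqlite3.connect(":memory:")
load(conn, transform(extract(SOURCE_CSV)))

row_count, total = conn.execute(
    "SELECT COUNT(*), SUM(amount) FROM orders"
).fetchone()
print(row_count, total)
```

Keeping extract, transform, and load as separate functions mirrors the way the stages are usually scheduled and monitored independently, and makes each one easy to test on its own.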

Who this course is for

ETL Architects

Cloud Data Engineers

Cloud Engineers

ETL Developers

Cloud Data Integration Specialists

Data Architects

Cloud Data Warehouse Developers

Homepage

https://www.udemy.com/course/aws-cloud-data-engineering/